Author Romain Dumont

Category Reverse-Engineering

Tags Microsoft, Windows, kernel, Defender, reverse-engineering, 2021

This article describes how Windows Defender implements its network inspection feature inside the kernel through the use of WFP (Windows Filtering Platform), explains how the device object’s security descriptor keeps the driver’s attack surface out of reach, and details some bugs I found. As a complement to this post, I am releasing a small utility to test the different bugs.

The interest

For any reverse engineer, understanding how things operate is interesting in itself, and closed-source software makes it even more exciting. Windows has evolved a lot and antivirus software needs to keep up with it. With PatchGuard around and given how focused on security the operating system has become, kernel hooks have mostly disappeared. However, Windows provides a wide variety of ways to collect information on objects (processes, threads, files, registry…) such as notification callbacks, filters, events… One can wonder how AV drivers collect information and what kind of information they gather. It made sense to take a look at Windows Defender since it would probably rely on all the latest features Windows has to offer. Finally, n4r1b’s blog series on Windows Defender kernel components such as WdFilter and WdBoot was really interesting and an invitation to contribute to the research on such components.

A driver based on WFP

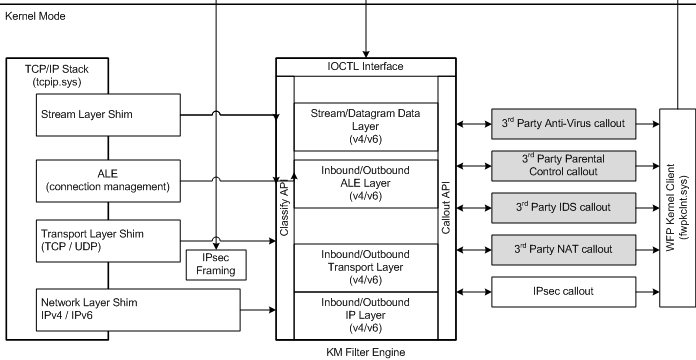

The Windows Filtering Platform allows filters to be set at different layers of the network stack and provides a rich set of features to interact with the traffic: data tampering, injection, applying policies, redirection, auditing…

The MSDN page About Windows Filtering Platform extensively describes all its features and how it operates.

Filtering concept

The file version of the driver described here is 4.18.2102.3-0.

As hinted previously, the network inspection driver, WdNisDrv, relies heavily on the WFP model. The architecture is quite complex, but basically a driver needs to register filters on a specific layer or sub-layer, specify the filtering conditions and then provide a set of callbacks for that filter, called a callout.

It is possible to dump the different filters that are currently configured on the system by issuing the following command:

netsh wfp show filters

It will output a very verbose XML file containing the filters, callouts, conditions, layers… in place on the system.

For a comprehensive list of layers that the packets go through while traversing the network stack, the page TCP Packet Flows can be consulted. It conveniently maps the TCP flags with the layers when establishing a connection. The UDP version is also available.

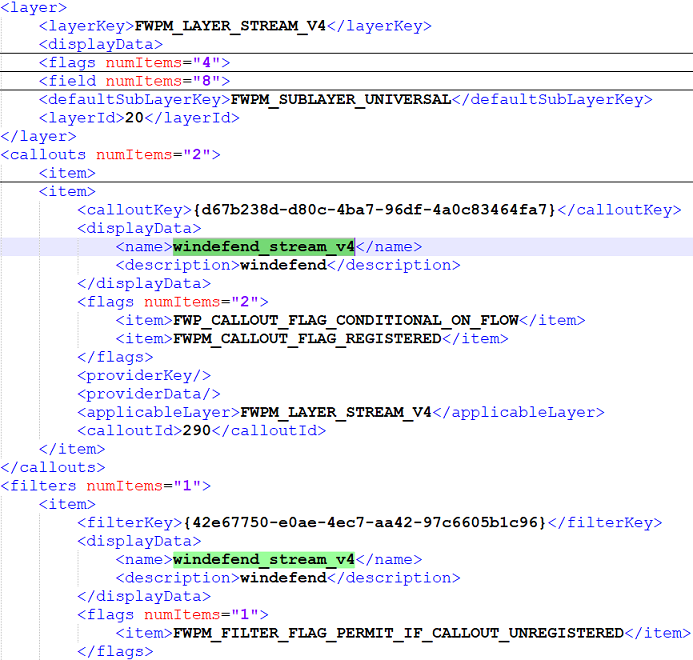

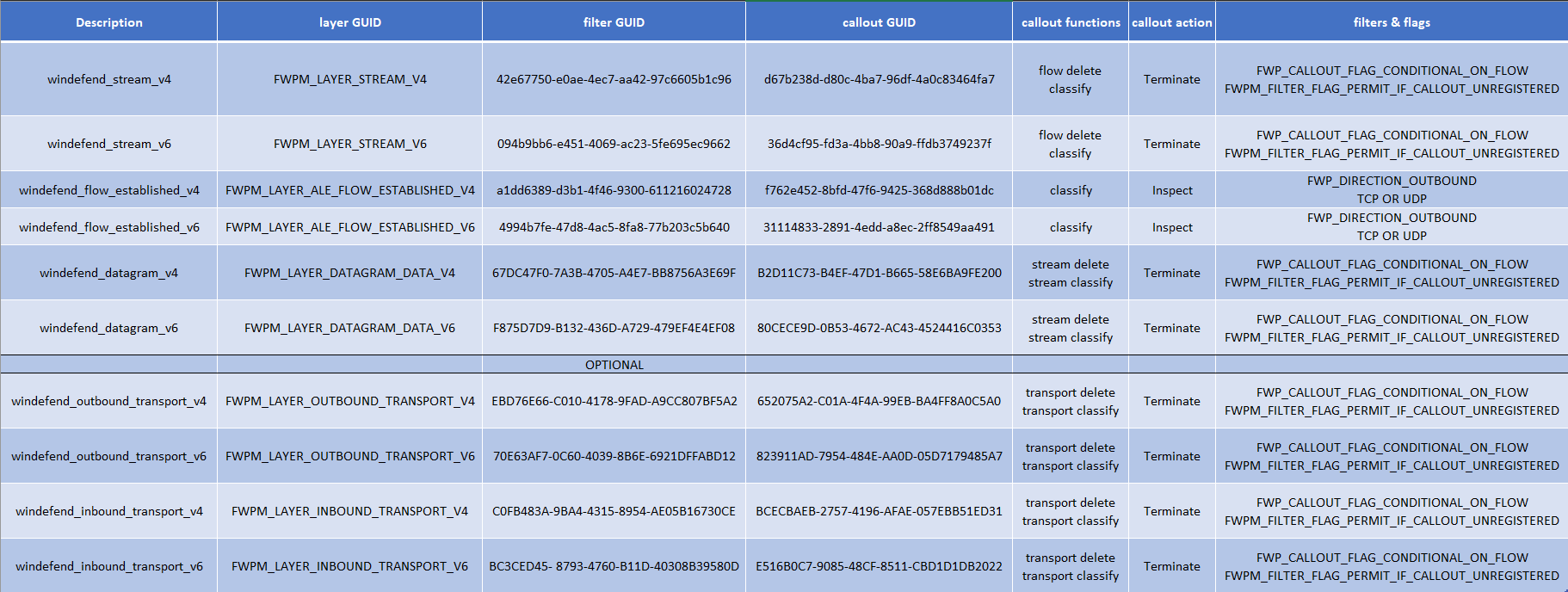

When searching for the word “windefend” in the XML file, one can retrieve the configuration for the different layers. For instance, when looking at the layer FWPM_LAYER_STREAM_V4, one can learn the callout windefend_stream_v4 is associated and registered.
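The search for “windefend” can also be scripted. The following is a minimal sketch that walks every element of the XML and reports those whose text mentions the keyword; it deliberately makes no assumption about the schema of the netsh output, and the `SAMPLE` document and the helper name `find_elements_mentioning` are hypothetical stand-ins used only for illustration.

```python
import xml.etree.ElementTree as ET


def find_elements_mentioning(xml_text, needle):
    """Return (tag, text) pairs for every element whose text contains needle."""
    needle = needle.lower()
    root = ET.fromstring(xml_text)
    hits = []
    for elem in root.iter():
        text = (elem.text or "").strip()
        if needle in text.lower():
            hits.append((elem.tag, text))
    return hits


# Hypothetical, heavily trimmed sample. The real netsh output is far larger
# and its exact element layout is not reproduced here.
SAMPLE = """
<wfpdiag>
  <callouts>
    <item><displayData><name>windefend_stream_v4</name></displayData></item>
    <item><displayData><name>some_other_callout</name></displayData></item>
  </callouts>
</wfpdiag>
"""

if __name__ == "__main__":
    for tag, text in find_elements_mentioning(SAMPLE, "windefend"):
        print(tag, text)
```

On a real system, the text of the file produced by the netsh command would be passed in place of `SAMPLE`.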

The flag FWP_CALLOUT_FLAG_CONDITIONAL_ON_FLOW indicates that a context needs to be associated with the stream for the callout to be called. Its associated filter indicates that traffic is allowed to pass if the callout ever gets unregistered, and that the callout can either block or permit the processing of the stream. It works hand in hand with the FWPM_LAYER_ALE_FLOW_ESTABLISHED_V4 layer.

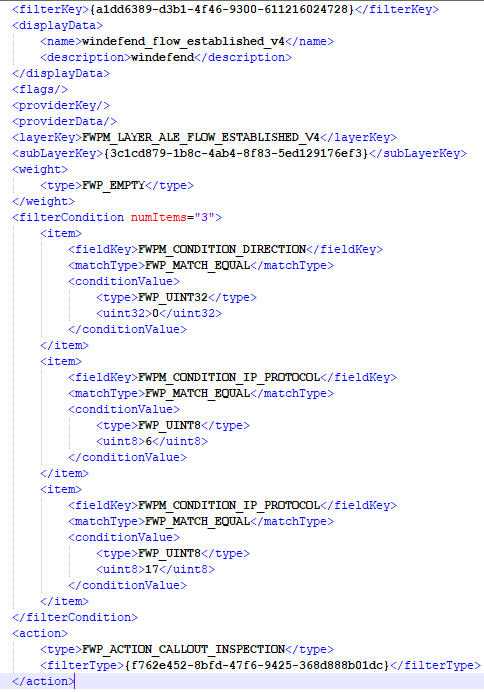

The MSDN documentation indicates that this layer is used to notify when a TCP connection is established. The callout is responsible for creating a “user-defined” FLOW_CONTEXT structure, which is necessary for the stream layer callout to be called. The associated filter has the following conditions that need to be fulfilled for the callout to be triggered:

the direction of the flow needs to be outbound (FWP_DIRECTION_OUTBOUND);

the IP protocol needs to be either TCP or UDP;

the callout can only inspect the packets, not terminate the traffic (FWP_ACTION_CALLOUT_INSPECTION).

To sum up the flow of an IPv4 packet inside the network inspection driver: when a connection is established, it goes through the FWPM_LAYER_ALE_FLOW_ESTABLISHED_V4 layer. Then the packet passes through the stream/datagram layer filters if a flow context has been created by the preceding layer. When the connection is closed, the packet goes through the same layers and the flow context gets deleted. At each layer, the set of callbacks registered with the callout is executed.
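The lifecycle summarised above can be modelled in a few lines. This is only a toy model under simplifying assumptions, not the driver’s code: the class `FlowTable`, its method names and the content of the context dictionary are all hypothetical, and a plain dictionary stands in for the association that WFP maintains between a flow and its context.

```python
class FlowTable:
    """Toy model: flow handle -> flow context, as created by the ALE layer."""

    def __init__(self):
        self.contexts = {}

    def on_flow_established(self, flow_handle, process_id):
        # ALE_FLOW_ESTABLISHED classify: create and attach a flow context.
        self.contexts[flow_handle] = {"pid": process_id, "packets": 0}

    def on_stream_data(self, flow_handle, data):
        # The stream/datagram classify only runs for flows carrying a context.
        ctx = self.contexts.get(flow_handle)
        if ctx is None:
            return False  # callout not invoked for this flow
        ctx["packets"] += 1
        return True

    def on_flow_delete(self, flow_handle):
        # Flow delete callback: release everything tied to the connection.
        self.contexts.pop(flow_handle, None)
```

The point of the model is the dependency between layers: without the context created at connection establishment, the stream layer callout never sees the traffic.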

Callouts

The registration of a callout is achieved via a call to FwpsCalloutRegister2, which takes a FWPS_CALLOUT2_ structure as an argument. It is composed of a GUID that is associated with a filter and three different callbacks: notify, classify and delete. The first one is not used by the filtering driver. Continuing with the IPv4 packet flow, the driver registers only a classify function for the FWPM_LAYER_ALE_FLOW_ESTABLISHED_V4 layer. It has the following prototype:

void FWPS_CALLOUT_CLASSIFY_FN2(

const FWPS_INCOMING_VALUES0 *inFixedValues,

const FWPS_INCOMING_METADATA_VALUES0 *inMetaValues,

void *layerData,

const void *classifyContext,

const FWPS_FILTER2 *filter,

UINT64 flowContext,

FWPS_CLASSIFY_OUT0 *classifyOut

)

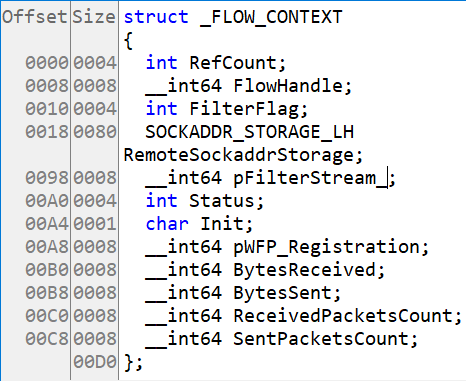

As stated previously, the function is responsible for creating the flow context that will be associated with any exchanged packets during the communication between the two endpoints. It uses its different arguments to fill in the following structure.

The callout for the FWPM_LAYER_STREAM_V4 layer registers the classify and delete functions. The classify function’s main goal is to determine if the packet should be dropped or not. It does so by looking at the FilterFlag member of the FLOW_CONTEXT structure. This flag is based on the current configuration of the network protection. The delete function’s main goal is to release, free and delete everything that was associated with the connection such as the FLOW_CONTEXT.

A very important point is that each one of these functions creates a notification for the userland service WdNisSvc to handle. This process, backed by the NisSrv.exe executable, is the one responsible for analysing the network flow from the received notifications. According to an old symbol file (PDBs are no longer available) and a quick analysis, it comprises all sorts of parsers (HTTP, JSON…).

Notifications

Basically, the userland service sends a specific IOCTL to the WdNisDrv driver to request a connection notification. The driver uses a Cancel-Safe IRP queue to keep track of requests and complete them when a callout is called. A connection notification begins with a header and is followed by a union depending on the type of notification.

typedef struct {

unsigned long long CreationTime;

unsigned long long NotificationType;

} _CONNECTION_NOTIFICATION_HEADER;

typedef struct {

_CONNECTION_NOTIFICATION_HEADER Header;

union {

_FLOW_NOTIFICATION FlowNotification;

_STREAM_DATA_NOTIFICATION StreamDataNotification;

_ERROR_NOTIFICATION ErrorNotification;

_FLOW_DELETE_NOTIFICATION FlowDeleteNotification;

};

} _CONNECTION_NOTIFICATION;
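As a rough illustration of how a consumer could split such a buffer, the sketch below reads the two 64-bit header fields and returns the rest as the type-dependent payload. It assumes little-endian fields with no padding between them, which follows from the header definition above; the function name `split_notification` is hypothetical, and the numeric values of NotificationType are not given in this article, so the payload is left undecoded.

```python
import struct

# CreationTime and NotificationType, both 64-bit little-endian.
HEADER = struct.Struct("<QQ")


def split_notification(buf):
    """Return (creation_time, notification_type, payload) from a raw buffer."""
    if len(buf) < HEADER.size:
        raise ValueError("buffer shorter than the 16-byte header")
    creation_time, notification_type = HEADER.unpack_from(buf, 0)
    return creation_time, notification_type, bytes(buf[HEADER.size:])
```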

For instance, when a connection is established, the classify function for that layer creates the following notification (the process path is appended to the structure):

typedef struct {

unsigned long long FlowHandle;

unsigned short Layer;

unsigned int CalloutId;

unsigned int IpProtocol;

unsigned char FilterFlag;

union {

SOCKADDR_IN IPv4;

SOCKADDR_IN6 IPv6;

SOCKADDR_STORAGE_LH IPvX;

} LocalAddress;

union {

SOCKADDR_IN IPv4;

SOCKADDR_IN6 IPv6;

SOCKADDR_STORAGE_LH IPvX;

} RemoteAddress;

unsigned int ProcessId;

unsigned long long ProcessCreationTime;

unsigned char IsProcessExcluded;

unsigned int ProcessPathLength;

} _FLOW_NOTIFICATION;

The stream data notification is simpler. Its most important parts are the data itself, which is appended to the notification, and the StreamFlags member, which indicates whether the packet is outbound or inbound along with other flags (PSH, URG).

typedef struct {

unsigned long long PktExchanged;

unsigned long long FlowHandle;

unsigned short Layer;

unsigned int CalloutId;

unsigned short StreamFlags;

unsigned short IsStreamOutbound;

unsigned int StreamSize;

} _STREAM_DATA_NOTIFICATION;

As such, before any data is sent, the service knows which program established a connection and which IP address and port it communicates with. Then it can keep track of sent and received packets on that layer.

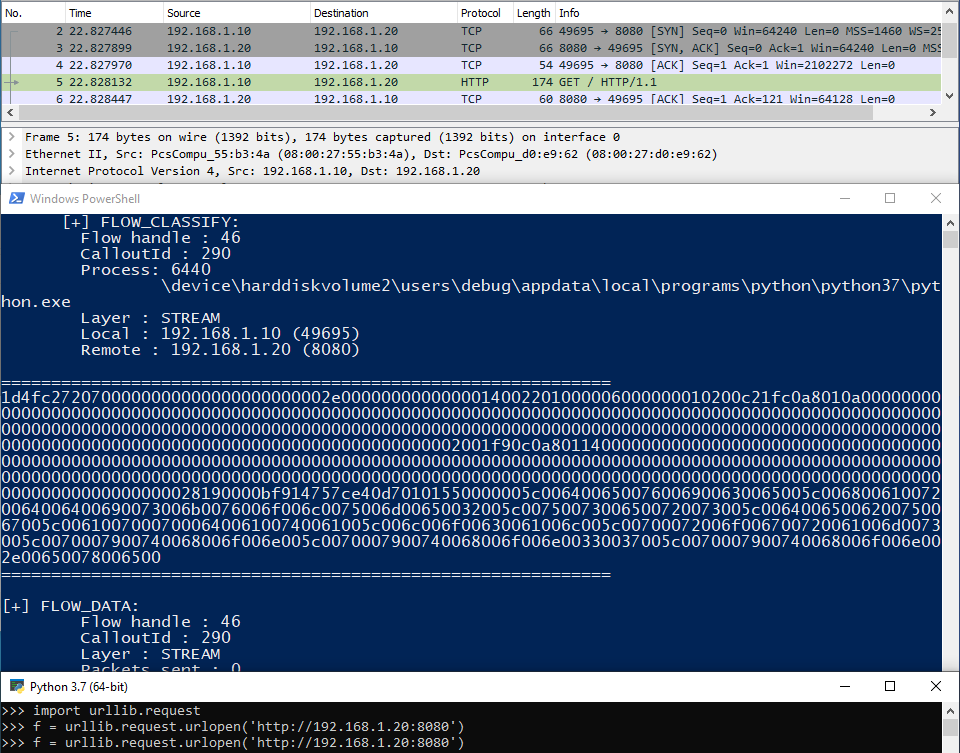

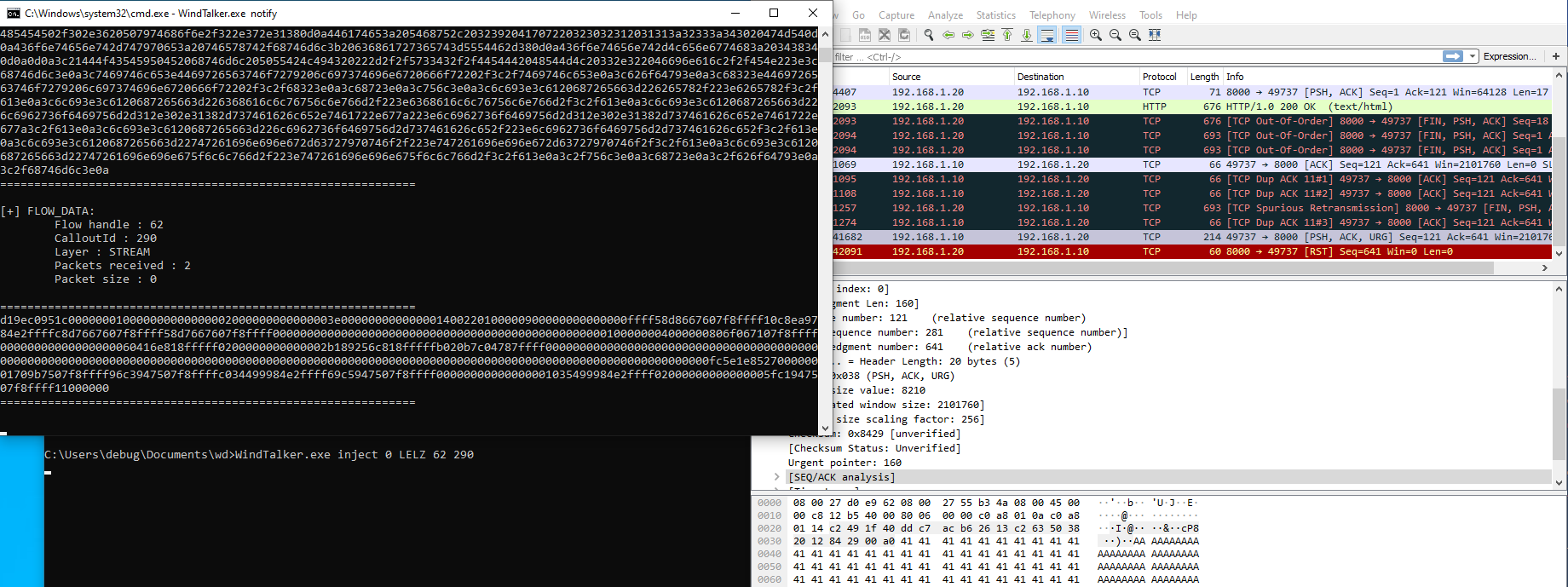

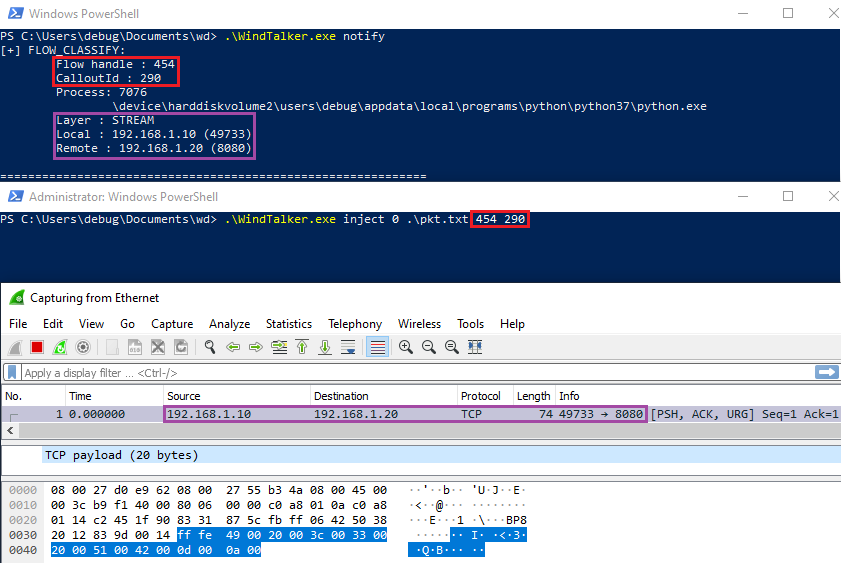

I developed a small utility that displays these notifications in real time.

Having gathered the different information on the filters, the callouts and notifications, it is then possible to understand how the inspection driver retrieves network information and what kind of information it grabs to follow the flow of data. The following table sums up all the different callouts, filters, conditions and flags.

Configuration and extra-features

Set/Get/Add-MpPreference

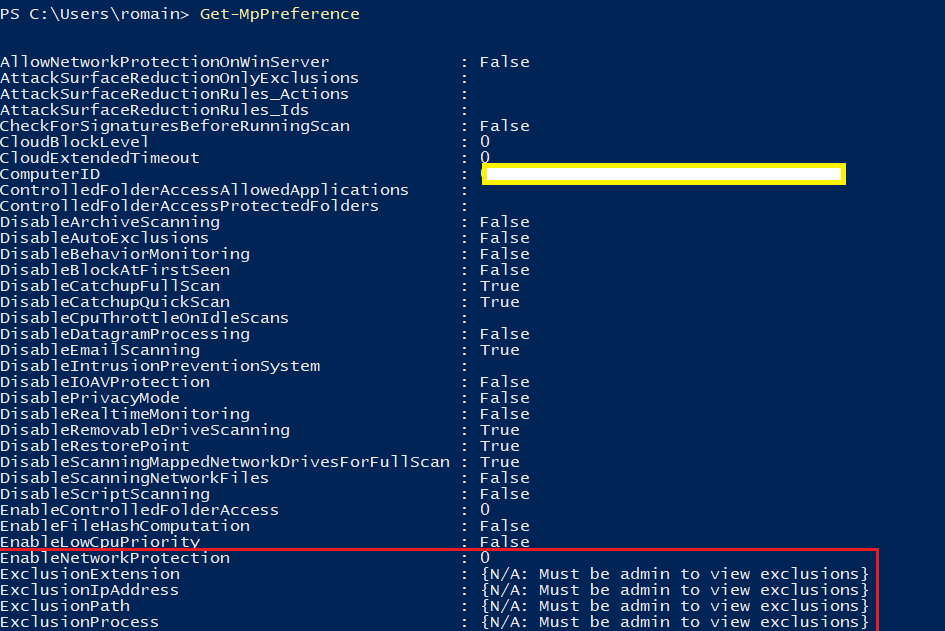

When configuring Windows Defender through the “Windows Security” application, the possibilities exposed are very limited. However, it is possible to use the Defender PowerShell module to have access to more configuration options (filtering level, exclusions…). The module is described by the ProtectionManagement.mof and implemented in the dll with the same name. Get-MpPreference is used to retrieve the current configuration and Add-MpPreference and Set-MpPreference to set it.

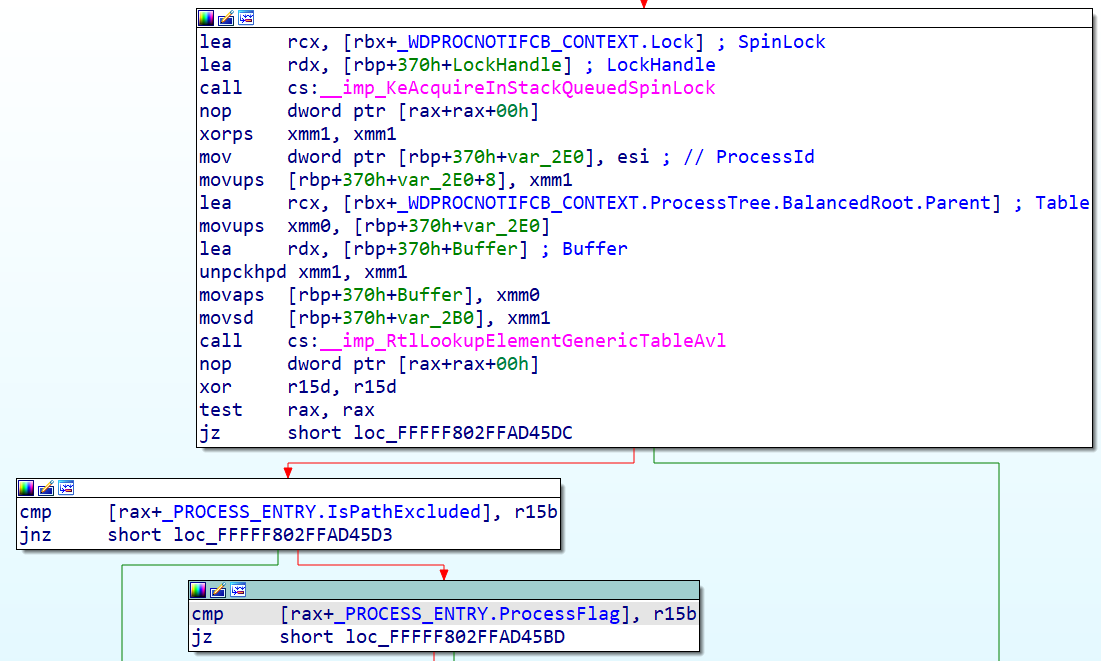

As one can see, there are four kinds of exclusion, but the most interesting one is ExclusionIpAddress since this option is not available in the GUI. Essentially, modifying the configuration results in an IOCTL being sent to the driver. For instance, when excluding an IP address, a list of SOCKADDR_STORAGE structures is passed to the driver and added to an AVL tree; then, when a connection is established, the destination address is checked against the tree.

The same goes for processes; however, the scheme is a little different so as to provide more flexibility. During its initialization, the WdNisDrv driver registers a callback which is “notified” by the WdFilter driver through \Callback\WdProcessNotificationCallback. That other driver implements a process notification callback via PsSetCreateProcessNotifyRoutine. An AVL tree is also created, and each process notification updates the tree whenever a process is created or terminated, or when the state of the exclusion changes. When a connection is established, the process ID is checked against that tree.
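The IP address exclusion lookup can be sketched as an ordered-set membership test. The example below is not the driver’s implementation: it swaps the AVL tree for a sorted list searched with `bisect`, which gives the same logarithmic lookup with much less code, and the class name `ExclusionSet` and the choice of key (address family, then raw address bytes) are assumptions made for illustration.

```python
import bisect
import ipaddress


class ExclusionSet:
    """Ordered set of excluded addresses (sorted list instead of an AVL tree)."""

    def __init__(self):
        self._keys = []

    @staticmethod
    def _key(address):
        ip = ipaddress.ip_address(address)
        # Order by IP version first, then by the raw address bytes.
        return (ip.version, ip.packed)

    def add(self, address):
        key = self._key(address)
        pos = bisect.bisect_left(self._keys, key)
        if pos == len(self._keys) or self._keys[pos] != key:
            self._keys.insert(pos, key)  # keep the list sorted, no duplicates

    def __contains__(self, address):
        key = self._key(address)
        pos = bisect.bisect_left(self._keys, key)
        return pos < len(self._keys) and self._keys[pos] == key
```

At connection time, the check then reduces to `destination in exclusions`, which is the role the tree lookup plays in the description above.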

The module offers the possibility to configure the network protection level via the EnableNetworkProtection parameter, which takes one of three values: Disabled, Enabled, AuditMode. Enabled blocks IP addresses and domains considered malicious, while AuditMode doesn’t block, but simply creates Windows events related to connections that would have been blocked. The log name is “Microsoft-Windows-Windows Defender/Operational” and the interesting events are 1125 and 1126. They show the destination that was reached and the program which accessed it. Both modes produce connection notifications for the userland service to parse.

Associated IOCTLs

As explained, the driver can be configured through the use of I/O control codes. Here’s the list of IOCTLs and their functions:

0x226005 : set the filtering state

0x226009 : set process exception (list of executable paths)

0x226011 : inject a stream or datagram in the stack

0x226015 : set IP address exclusion

0x22A00E : request a connection notification
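These codes can be broken down with the standard CTL_CODE bit layout (device type in bits 16 to 31, required access in bits 14 and 15, function in bits 2 to 13, transfer method in bits 0 and 1). The following sketch only decodes the values listed above; the helper name `decode_ioctl` is hypothetical.

```python
from collections import namedtuple

IoctlFields = namedtuple("IoctlFields", "device_type access function method")


def decode_ioctl(code):
    """Split a 32-bit I/O control code into its CTL_CODE fields."""
    return IoctlFields(
        device_type=(code >> 16) & 0xFFFF,
        access=(code >> 14) & 0x3,
        function=(code >> 2) & 0xFFF,
        method=code & 0x3,
    )


CODES = [0x226005, 0x226009, 0x226011, 0x226015, 0x22A00E]

if __name__ == "__main__":
    for code in CODES:
        f = decode_ioctl(code)
        print(f"{code:#x}: device={f.device_type:#x} access={f.access} "
              f"function={f.function:#x} method={f.method}")
```

Decoded this way, all five codes carry device type 0x22 (FILE_DEVICE_UNKNOWN). The first four use method 1 (METHOD_IN_DIRECT) with read access, while the notification request 0x22A00E uses method 2 (METHOD_OUT_DIRECT) with write access, which is consistent with it being the only request that returns data to the service.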

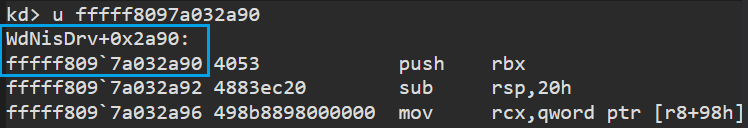

As one can see, one IOCTL allows the injection of a stream or datagram directly into the network stack. For example, to inject data at the stream layer, a connection must be open and its FlowHandle retrieved. This value, along with the data and the callout ID of the stream layer, is passed via the input buffer of the IRP and used to inject the packet via a call to FwpsStreamInjectAsync0. The following image illustrates a packet injection inside an open connection by the tool I developed. No stream notifications are passed to the userland service.

The same can be achieved with the datagram layer via a call to FwpsInjectTransportSendAsync0. The purpose of this IOCTL is not clear, but it is certainly a powerful feature.

Quick overview on security

An ACL away from mayhem

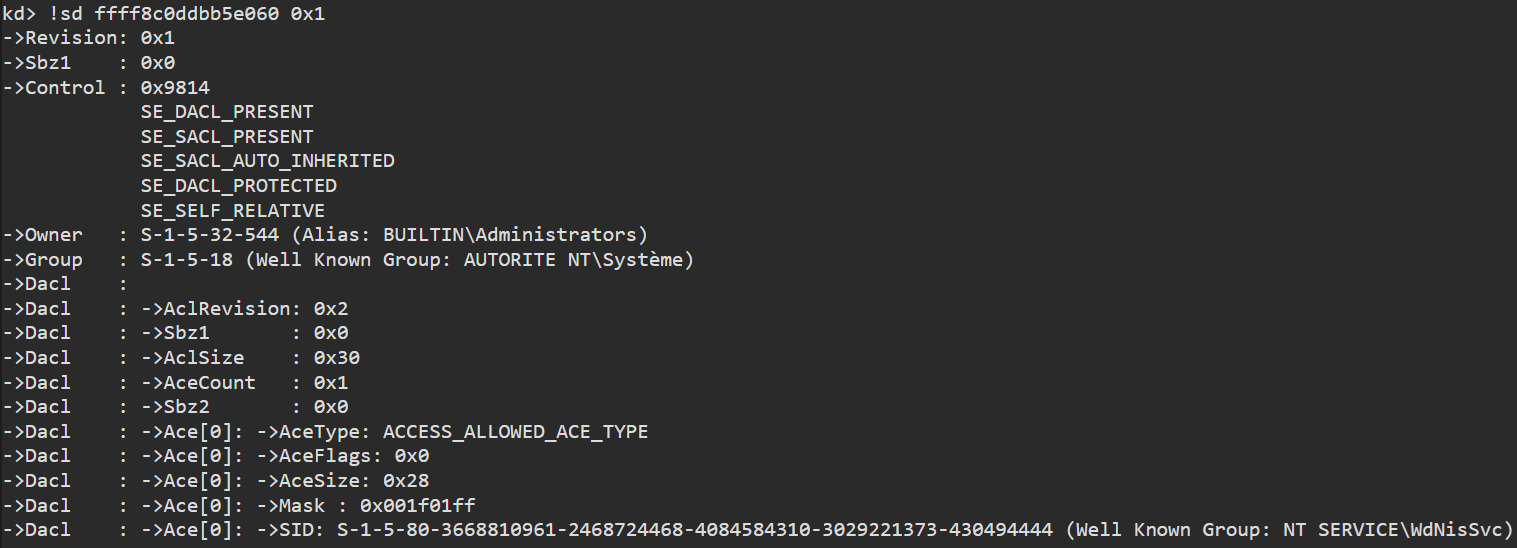

Early in the initialization process, the driver calls the WdmlibIoCreateDeviceSecure routine to create a device object. It sets the DO_EXCLUSIVE bit in the Flags member of the _DEVICE_OBJECT. Later, it proceeds to apply a security descriptor to the device object.

unpack('<IIIII', SHA1.new("WDNISSVC".encode('utf-16le')).digest())

(3668810961, 2468724468, 4084584310, 3029221373, 430494444)

The security descriptor basically states that only the WdNisSvc service can open a handle on the device object.
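The five integers above are the sub-authorities of the service SID: Windows derives them from the SHA-1 digest of the upper-cased service name encoded as UTF-16LE, and prefixes them with S-1-5-80. A minimal sketch using only the standard library follows (the helper name `service_sid` is hypothetical):

```python
import hashlib
import struct


def service_sid(service_name):
    """Derive the S-1-5-80-... SID string for a Windows service name."""
    digest = hashlib.sha1(service_name.upper().encode("utf-16-le")).digest()
    # The 20-byte digest is read as five little-endian 32-bit integers.
    sub_authorities = struct.unpack("<5I", digest)
    return "S-1-5-80-" + "-".join(str(v) for v in sub_authorities)


if __name__ == "__main__":
    print(service_sid("WdNisSvc"))
```

The resulting string can be compared with the SID found in the DACL of the device object.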

In order to play with the different IOCTLs, a script has been developed which essentially removes the exclusive-open restriction on the device and only keeps the SE_SELF_RELATIVE flag inside the control member of the security descriptor. This effectively removes any protection on the device. However, because the driver was designed around an exclusive device, whenever an IRP_MJ_CLEANUP is received (most likely via a call to CloseHandle), the driver stops filtering.

The following security bugs I found can NOT be reached without deactivating the aforementioned mechanisms. In order to reproduce the following points, a kernel debugging session must be created with WinDbg Preview. Then, once the driver is loaded and initialized, the script can be executed to remove the protections.

dx Debugger.State.Scripts.mod_sec.Contents.open_dev()

Integer overflow in IP address exclusion

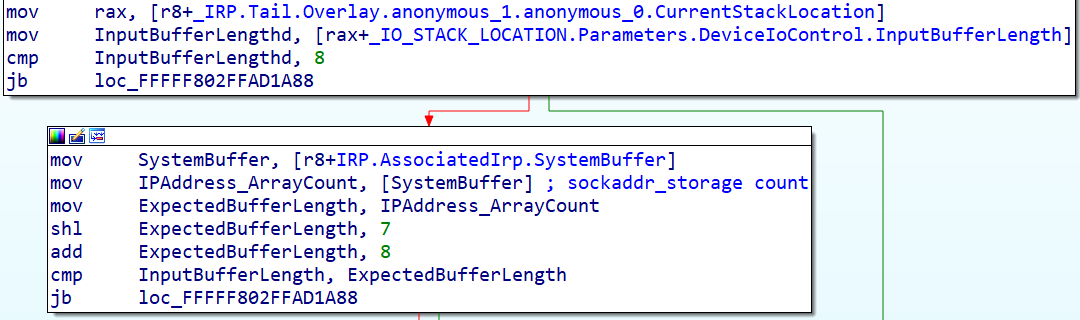

The function handling the IP address exclusion IOCTL expects a QWORD as a count integer followed by an array of SOCKADDR_STORAGE describing the IP addresses.

The amount of needed bytes is computed and checked like this: (_IO_STACK_LOCATION.Parameters.DeviceIoControl.InputBufferLength < count * sizeof(sockaddr_storage) + sizeof(count)).

Since the overflow is not taken into account, setting at least one of the 7 most significant bits of the count variable makes the multiplication wrap around and the check pass. However, the count is still used in the loop as the number of SOCKADDR_STORAGE structures to insert. This causes a BSOD when the code tries to access a non-mapped memory page.
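The wrap-around is easy to reproduce with arithmetic truncated to 64 bits. The sketch below assumes the computation is carried out on 64-bit unsigned values, as the QWORD count suggests; `sizeof(SOCKADDR_STORAGE)` is 128 bytes, which is why the 7 most significant bits matter (128 is 2 to the power of 7). The function names are hypothetical.

```python
MASK64 = (1 << 64) - 1
SIZEOF_SOCKADDR_STORAGE = 128
SIZEOF_COUNT = 8


def needed_bytes(count):
    """Size computation with the result wrapping at 64 bits."""
    return (count * SIZEOF_SOCKADDR_STORAGE + SIZEOF_COUNT) & MASK64


def passes_length_check(input_buffer_length, count):
    # The request is rejected only when the buffer is smaller than needed.
    return not (input_buffer_length < needed_bytes(count))
```

With `count = 1 << 57`, the product wraps to zero and `needed_bytes` returns 8, so an input buffer holding nothing but the count itself passes the check while the loop still tries to process 2^57 entries.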

Out-Of-Bound read in datagram injection

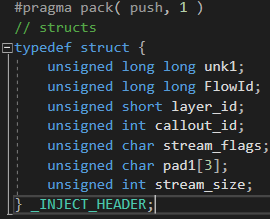

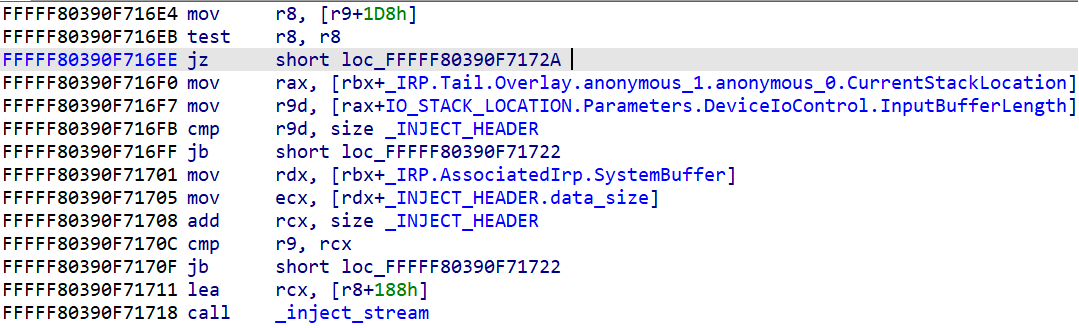

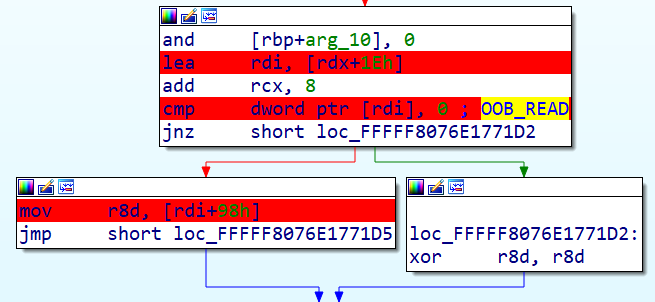

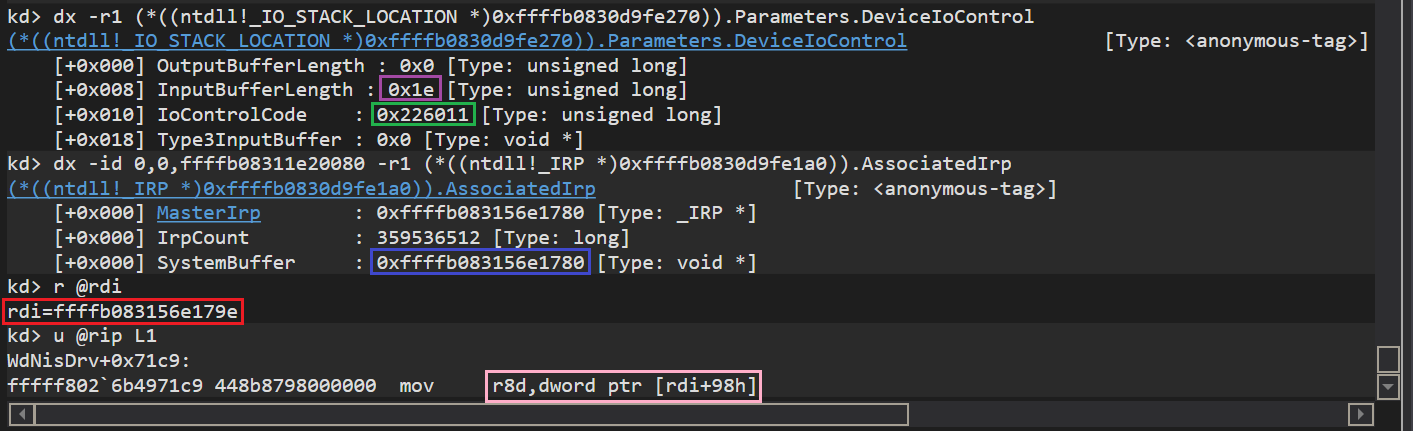

The function handling IOCTLs does some preliminary checks on the input buffer to check if the length is correct. The buffer contains an “injection header”.

First, it checks that the input buffer is big enough to hold the header structure, which is 30 bytes long. Then it adds the size of the header to a size specified inside the header, and checks that the size of the input buffer is equal to or above the result. However, it is possible to set this size field to zero and pass the check. In that case, when the stream injection function is called, two accesses are made that cause an out-of-bounds read: one right after the injection header, at offset 0x1E, and the second one at offset 0xB6.
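The logic of these checks can be modelled as follows. This is a sketch of the reasoning only, not decompiled code: the function names are hypothetical, and each access is treated as reading at least one byte at the given offset since the access width is not stated here.

```python
HEADER_SIZE = 0x1E  # 30-byte injection header


def passes_checks(input_length, declared_size):
    """Mirror the two length checks described for the injection IOCTL."""
    if input_length < HEADER_SIZE:
        return False
    return input_length >= HEADER_SIZE + declared_size


def out_of_bounds_reads(input_length, offsets=(0x1E, 0xB6)):
    """Offsets read by the injection routine that lie past the input buffer."""
    return [off for off in offsets if off >= input_length]
```

A 30-byte buffer with a declared size of zero satisfies `passes_checks(30, 0)`, yet both offsets returned by `out_of_bounds_reads(30)` fall outside the buffer.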

While debugging, the bug can be observed by looking at the input buffer length and the access being made.

It’s very unlikely that the first and even the second out-of-bound read will cause a BSOD. The first value is used to determine if the packet contains some additional data while the second one is used to tell the size of it. However, there doesn’t seem to be a case where these values could lead to another bug.

Stack leakage via connection notifications

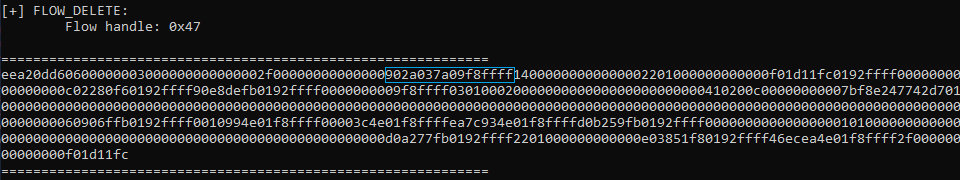

Analyzing the creation of connection notifications revealed that each one consists of a header specifying its type, followed by a union that depends on that type. This gives a 134-byte structure. When creating a notification, the driver uses a temporary notification structure on the stack. It then dynamically allocates a chunk of memory and initializes it with zeroes. However, it copies the whole temporary structure from the stack into the allocated memory using XMM registers. For instance, the _FLOW_DELETE_NOTIFICATION only indicates the flow handle that is being deleted, so the meaningful part of the connection notification is 24 bytes long. Because of the union, 134 bytes are allocated and copied. In other words, 110 extra bytes are copied (and leaked) from the stack to the buffer sent to userland. On top of that, when completing the IRP, the driver specifies a size of 134. The two following images show the utility displaying the delete connection notification; the light blue rectangle is an address on the stack that is being leaked, which corresponds to the actual stream deletion callback.
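The mechanism can be illustrated with a small model that reuses the sizes quoted above. It is a simulation under stated assumptions, not the driver’s code: the stale stack content is represented by an arbitrary byte pattern and the function name `build_delete_notification` is hypothetical.

```python
NOTIFICATION_SIZE = 134  # full structure size quoted in the text
DELETE_SIZE = 24         # header plus flow handle actually filled in


def build_delete_notification(stale_stack, header_and_handle):
    """Model the copy of a partly initialised stack structure to the heap."""
    if len(stale_stack) != NOTIFICATION_SIZE:
        raise ValueError("stale_stack must be NOTIFICATION_SIZE bytes")
    if len(header_and_handle) != DELETE_SIZE:
        raise ValueError("header_and_handle must be DELETE_SIZE bytes")
    # Temporary structure on the stack: only the first 24 bytes are written,
    # the rest still holds whatever was on the stack before.
    stack_tmp = bytearray(stale_stack)
    stack_tmp[:DELETE_SIZE] = header_and_handle
    # The heap buffer is zeroed, then overwritten by a full-size copy.
    heap = bytearray(NOTIFICATION_SIZE)
    heap[:] = stack_tmp
    return bytes(heap)
```

Zeroing the allocation does not help here because the full-size copy overwrites every zeroed byte; copying only the bytes that were initialised would leave the tail of the buffer clean.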

Testing the bugs

To assess whether the bugs can actually be triggered, I'm sharing a small utility along with a WinDbg Preview script that makes it possible to open a handle to the device. The tool can be found here.

After having executed the script as previously described, the tool can be launched with some options:

inject: injects a packet from a file into an open connection

notify: displays live connection notifications

bsod: triggers the integer overflow via the IP address exclusion bug

ipexclu: randomly generates an IPv4 address to exclude (testing purposes only)

Most of them are pretty self-explanatory, but the inject and notify commands need a few extra steps. In both cases, the EnableNetworkProtection option must be set to 1 or 2, which can be achieved by issuing the following PowerShell command with administrator rights.

Set-MpPreference -EnableNetworkProtection 1

Then the utility can be run with the notify command. When opening the Edge browser, for example, a long list of notifications should be displayed. If it does not work: terminate the tool, change the level of network protection to 1 or 2 and run the tool again.

One has to keep in mind that when terminating the utility, the filters are unregistered. Since the driver was not implemented to be queried by multiple processes, its cleanup major function (called when the handle is closed by the userland process) unregisters the callbacks by calling FwpsCalloutUnregisterById0.

When testing packet injection inside a connection, one needs to retrieve its flow handle and the filter engine callout ID. The callout ID can be retrieved from the XML file; both values can also be read from the output of the utility’s notify command.

For instance, let’s take the scenario where a debuggee machine A wants to make a request to an HTTP server B. After having set up the notify command, A can start querying B. The flow handle can be retrieved from the flow established connection notification. One should leave the notify command running, open another terminal and use the inject command with the retrieved parameters.

Disclosure Timeline

May 28, 2021: Bugs reported to MSRC (Microsoft Security Response Center).

June 1, 2021: A case is opened by MSRC.

June 21, 2021: MSRC acknowledged the findings but determined that they do not meet the bar for a security update release. The case is closed.

Conclusion

Digging into the internals of an AV and observing how it uses the system to gather information is really interesting from both an offensive and a defensive point of view. It can help to tweak the mechanisms in place and gives more insight into what’s really happening in the background. Sadly, the bugs I found cannot be triggered due to the DACL on the device object, but it was a great code analysis exercise.